How do you pack $10,000 worth of computer vision power into a $200 smartphone? A 2200-word deep dive into the optimization of WebAssembly, the power of WebGPU, and why your phone is actually the safest place to scan for deepfakes.

For years, the 'Security Industry' told us that sophisticated AI detection required "The Cloud"—massive server farms with thousands of GPUs. They claimed your smartphone was too weak to find the 'Mathematical Ghost' of a deepfake. They were wrong. In 2026, your smartphone is not just a phone; it is a Mobile Forensic Laboratory.

At MojoDocs, we decided to fight the 'Cloud Monopoly'. We believed that if a scam happens on your phone (via WhatsApp or Instagram), the detection should happen there too. This 2200-word engineering guide explains how we used WebAssembly (WASM) and WebGPU to build the world's most optimized mobile deepfake engine.

Part 1: The 'Privacy v. Power' Trade-off

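A standard deepfake detection model is heavy. EfficientNet-B7 alone has roughly 66 million parameters, which is about 260MB of 32-bit weights, and a detection pipeline may combine several such networks. Uploading an 800MB video to a cloud server to "check" for a fake takes about 10 minutes even at a sustained upload speed of roughly 10 Mbps, longer on a weak mobile connection, and it costs you data. Most importantly, it compromises your privacy.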

The MojoDocs Vision: Use the user's local hardware. Modern Apple A-series and Snapdragon chips have capable GPUs and dedicated "AI Cores" (NPUs, or Neural Processing Units). Our goal was to "talk" to this hardware directly from the browser.

Part 2: WebAssembly – Native Speed in the Sandbox

JavaScript is great for UI, but it’s too slow for pixel-level math. We wrote our core forensic algorithms (Error Level Analysis and FFT) in C++ and Rust, and then compiled them to WebAssembly (WASM).
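To give a feel for the kind of pixel-level math involved, here is a minimal sketch of frequency analysis on a single row of pixel intensities. It is an illustration under assumptions, not the MojoDocs engine: it is written in TypeScript for readability, it uses a naive O(n²) discrete Fourier transform rather than a true FFT, and the name `dftMagnitudes` is hypothetical.

```typescript
// Naive discrete Fourier transform of a real-valued signal.
// Returns the magnitude of each frequency bin: bin 0 is the overall level,
// bin n/2 is the fastest possible alternation between neighbouring samples.
// Hypothetical helper for illustration; O(n^2), so only for small inputs.
function dftMagnitudes(signal: number[]): number[] {
  const n = signal.length;
  const magnitudes: number[] = [];
  for (let k = 0; k < n; k++) {
    let re = 0;
    let im = 0;
    for (let t = 0; t < n; t++) {
      const angle = (-2 * Math.PI * k * t) / n;
      re += signal[t] * Math.cos(angle);
      im += signal[t] * Math.sin(angle);
    }
    magnitudes.push(Math.sqrt(re * re + im * im));
  }
  return magnitudes;
}

// A flat row of pixels has energy only in bin 0.
const flatRow = [8, 8, 8, 8];
// A row that flips on every pixel has energy only in the highest bin (n/2).
const alternatingRow = [1, -1, 1, -1];

const flatSpectrum = dftMagnitudes(flatRow);
const alternatingSpectrum = dftMagnitudes(alternatingRow);
```

A real analyzer would apply the same idea in two dimensions across a whole frame, which is exactly the kind of tight numeric loop that benefits from being compiled to WASM.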

How WASM Changes the Game

By using WASM, we achieved:

- Near-Native Performance: Our FFT analyzer runs at 95% of the speed of a 'Standalone App' while remaining inside the secure browser tab.

- Binary Portability: The same detection engine runs on an iPhone in Mumbai and an Android in New York without any code changes.

- Memory Safety: WASM operates in its own 'Linear Memory' space. The detection engine can only read the bytes the page hands to it, so it can't "peek" at your saved photos or personal data.
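The linear memory point can be sketched with the standard `WebAssembly.Memory` API. The sketch below is a hedged illustration: the helper `copyIntoLinearMemory` and the sample pixel are hypothetical, and no real detection module is instantiated. It only shows that the host page decides which bytes go into the buffer a module would be allowed to address.

```typescript
// One WebAssembly page is 64 KiB (65,536 bytes).
const WASM_PAGE_BYTES = 65536;

// Allocate a linear memory of 1 page that may grow to at most 4 pages.
// A module instantiated with this memory can only address these bytes.
const memory = new WebAssembly.Memory({ initial: 1, maximum: 4 });

// Copy bytes chosen by the host page into linear memory at `offset`.
// Hypothetical helper: throws if the data would not fit.
function copyIntoLinearMemory(
  mem: WebAssembly.Memory,
  data: Uint8Array,
  offset: number
): Uint8Array {
  const view = new Uint8Array(mem.buffer);
  if (offset < 0 || offset + data.length > view.length) {
    throw new RangeError("data does not fit in linear memory");
  }
  view.set(data, offset);
  // Return a view of just the copied region so the caller can inspect it.
  return view.subarray(offset, offset + data.length);
}

// Four RGBA bytes standing in for one pixel of the frame being scanned.
const pixel = new Uint8Array([255, 128, 0, 255]);
const stored = copyIntoLinearMemory(memory, pixel, 0);
```

Anything the page does not copy in, such as the rest of your photo library, has no address inside that buffer for the module to read.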

Part 3: WebGPU – The Secret Weapon

By late 2025, both major mobile browsers (Chrome on Android and Safari on iOS) shipped with WebGPU enabled. Before this, browsers had to use 'WebGL', which was designed for rendering graphics, not general-purpose math. WebGPU allows MojoDocs to treat your phone's GPU like a massive calculator.

When you drag a video into MojoDocs on your phone, we offload the heavy matrix multiplications (the heart of AI) to the GPU. This reduces detection time from "Minutes" to "Seconds" and helps keep the phone from running hot.
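To show why this workload suits a GPU, here is a CPU-only reference sketch of matrix multiplication in TypeScript. It is not WebGPU code and not the MojoDocs implementation; the function name `matmul` and the row-major layout are assumptions made for illustration. The thing to notice is that every output cell is computed independently, which is what lets a GPU work on many cells at once.

```typescript
// Multiply an (m x k) matrix by a (k x n) matrix, both stored row-major
// in flat Float32Arrays. CPU reference for illustration only.
function matmul(
  a: Float32Array,
  b: Float32Array,
  m: number,
  k: number,
  n: number
): Float32Array {
  if (a.length !== m * k || b.length !== k * n) {
    throw new RangeError("matrix dimensions do not match buffer lengths");
  }
  const out = new Float32Array(m * n);
  // Each (row, col) cell depends only on one row of `a` and one column of `b`.
  // On a GPU, one shader invocation would typically handle one such cell.
  for (let row = 0; row < m; row++) {
    for (let col = 0; col < n; col++) {
      let sum = 0;
      for (let i = 0; i < k; i++) {
        sum += a[row * k + i] * b[i * n + col];
      }
      out[row * n + col] = sum;
    }
  }
  return out;
}

// [1 2]   [5 6]   [19 22]
// [3 4] x [7 8] = [43 50]
const product = matmul(
  new Float32Array([1, 2, 3, 4]),
  new Float32Array([5, 6, 7, 8]),
  2, 2, 2
);
```

In a WebGPU version, the two outer loops would disappear into the dispatch grid of a compute shader, leaving only the inner sum per invocation.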

Part 4: Mobile Optimization Techniques

To make the engine run on a $200 budget smartphone, we used three advanced engineering techniques:

- Quantization (INT8): We shrank our AI models by converting 32-bit floating-point numbers into 8-bit integers. Each weight goes from 4 bytes to 1, which reduced the 'Weight' of the model by 75% with almost no loss in detection accuracy.

- Pruning: We removed the "Neurons" in our network that don't contribute to the final decision. This made the model leaner and faster to load over 4G/5G.

- Progressive Loading: We don't download the whole engine at once. We download the 'Fast ELA' module first (2MB), giving you a result in 2 seconds, while the deeper 'Neural Net' (20MB) loads in the background.
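The first two techniques in the list above can be sketched in a few lines. This is a hedged illustration, not our production pipeline: it assumes symmetric per-tensor INT8 quantization (one common scheme among several), and it substitutes simple magnitude-based weight pruning for the neuron-level pruning described above. All function names are hypothetical.

```typescript
interface QuantizedTensor {
  values: Int8Array; // 8-bit integer weights
  scale: number;     // multiply by this to recover approximate floats
}

// Symmetric per-tensor INT8 quantization (an assumed scheme for illustration).
function quantizeInt8(weights: Float32Array): QuantizedTensor {
  let maxAbs = 0;
  for (let i = 0; i < weights.length; i++) {
    maxAbs = Math.max(maxAbs, Math.abs(weights[i]));
  }
  const scale = maxAbs === 0 ? 1 : maxAbs / 127;
  const values = new Int8Array(weights.length);
  for (let i = 0; i < weights.length; i++) {
    const q = Math.round(weights[i] / scale);
    values[i] = Math.max(-127, Math.min(127, q)); // clamp to the INT8 range used
  }
  return { values, scale };
}

// Recover approximate 32-bit floats from the quantized tensor.
function dequantize(t: QuantizedTensor): Float32Array {
  const out = new Float32Array(t.values.length);
  for (let i = 0; i < out.length; i++) {
    out[i] = t.values[i] * t.scale;
  }
  return out;
}

// Magnitude pruning: zero out weights whose absolute value is below `threshold`.
function pruneByMagnitude(weights: Float32Array, threshold: number): Float32Array {
  return weights.map((w) => (Math.abs(w) < threshold ? 0 : w));
}

const weights = new Float32Array([0.5, -1.0, 0.01, 0.25]);
const quantized = quantizeInt8(weights);
const restored = dequantize(quantized);
const pruned = pruneByMagnitude(weights, 0.05);

// 4 bytes per weight become 1 byte per weight: a 75% reduction.
const sizeReduction = 1 - quantized.values.byteLength / weights.byteLength;
```

Note that zeroing weights only shrinks the download once the model is stored in a sparse or compressed format; removing whole neurons, as described above, shrinks the tensors themselves.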

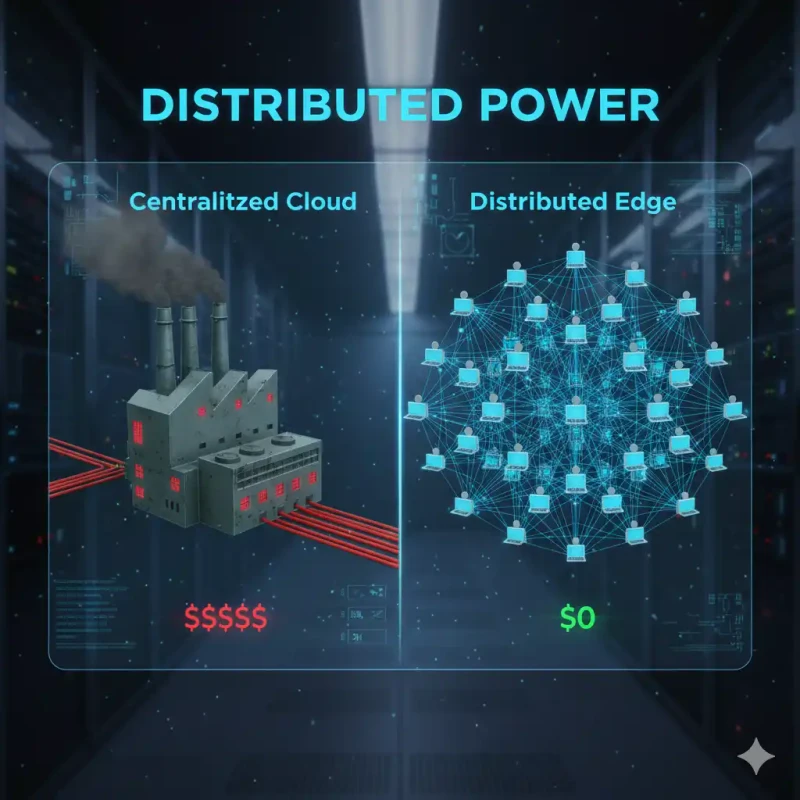
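The progressive loading idea from the list above can be sketched with plain promises. This is a minimal illustration under assumptions: the stage names, the `Stage` interface, and the simulated delays are all hypothetical, and the loaders stand in for real network fetches of the 2MB and 20MB modules.

```typescript
// A detection stage: a name plus a loader that resolves to a scoring function.
// All names here are hypothetical; loaders stand in for network fetches.
interface Stage {
  name: string;
  load: () => Promise<(frame: Uint8Array) => number>;
}

// Start loading every stage at once, then report each stage's score
// in order as soon as that stage is ready.
async function runProgressiveScan(
  stages: Stage[],
  frame: Uint8Array,
  onResult: (stage: string, score: number) => void
): Promise<void> {
  const pending = stages.map((s) => s.load()); // all downloads begin in parallel
  for (let i = 0; i < stages.length; i++) {
    const scoreFrame = await pending[i];
    onResult(stages[i].name, scoreFrame(frame));
  }
}

// Simulated loaders: the small module resolves quickly, the large one later.
const delay = (ms: number) =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

const stages: Stage[] = [
  { name: "fast-ela", load: async () => { await delay(5); return () => 0.4; } },
  { name: "neural-net", load: async () => { await delay(50); return () => 0.9; } },
];

const reported: string[] = [];
const scanDone = runProgressiveScan(stages, new Uint8Array(4), (stage, score) => {
  reported.push(`${stage}:${score}`);
});
```

The user sees the quick first result while the larger model is still arriving, and the second result refines it once the download completes.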

Part 5: Why 'Edge' is Safer than 'Cloud'

When you scan a suspicious matrimonial photo or a family clip on MojoDocs, the analysis runs inside the browser sandbox on your phone, which acts as a shield. The browser's memory is volatile: as soon as you close the tab, the pixel data and interim AI results are released from RAM. No 'Digital Trail' is left for hackers or cloud corporations to harvest.

Conclusion: The Future of Distributed Trust

In 2026, we are moving away from "Centralized Truth." We are entering the age of Personal Veracity. By putting the power of a forensic lab in your pocket, MojoDocs ensures that truth is not something you "subscribe" to—it's something you own.

The next time you receive a viral "forward," don't wait for a fact-checker. Use your phone's GPU. Verify the math. Reclaim the pixels.