That 'Free' tool isn't free. It's paid for by your data. A deep dive into the hidden economy of AI training datasets, India's DPDPA laws, and why Client-Side (Local) AI is the only safe way to edit sensitive documents.

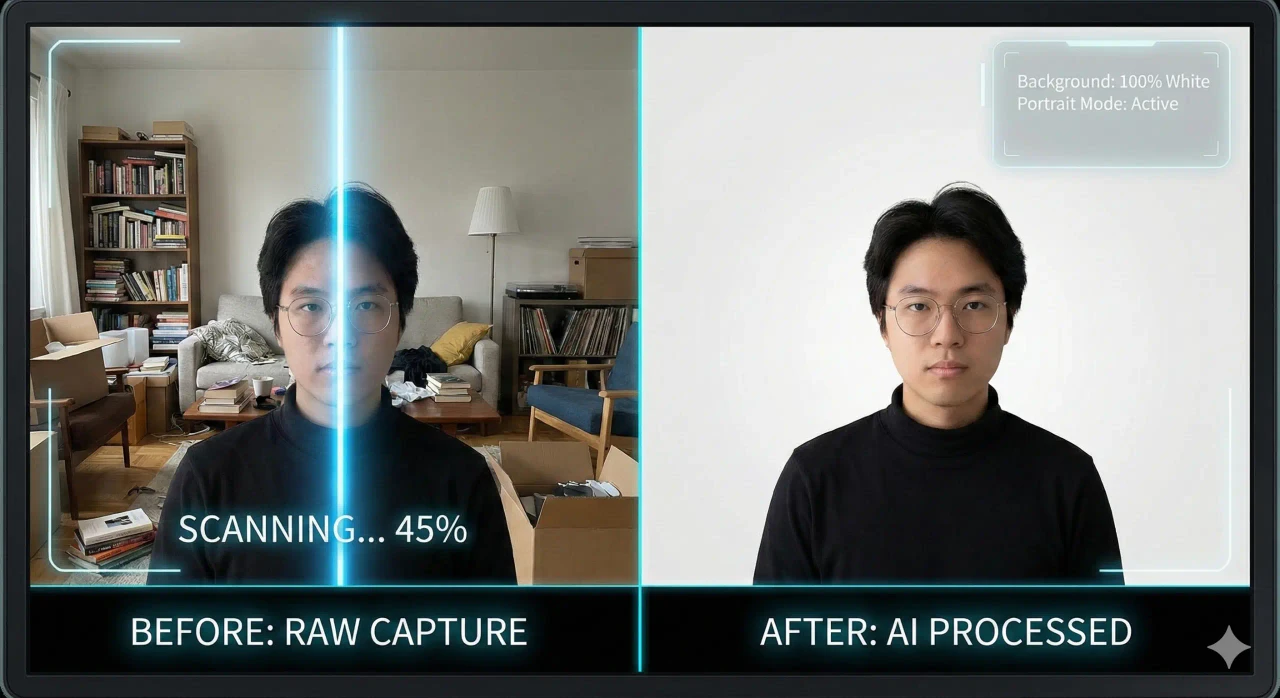

We all love the magic of AI. You upload a messy photo of your living room, click a single button, and poof: the background is gone, leaving a professional studio portrait. It feels effortless. It feels convenient. And best of all, it's free.

But as the old internet adage goes: "If you aren't paying for the product, YOU are the product."

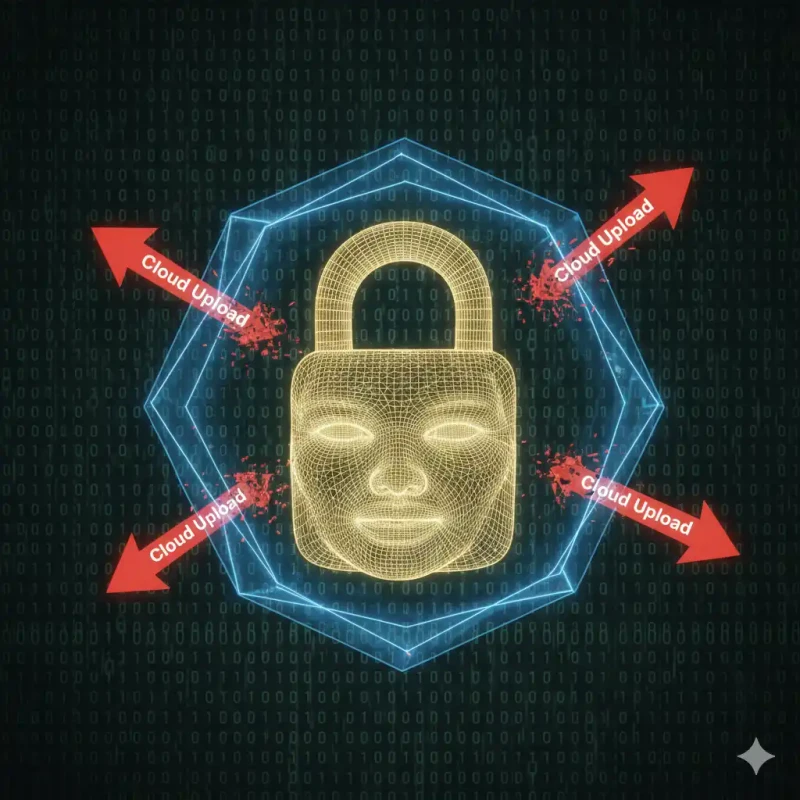

In 2026, creating high-quality AI models requires one thing above all else: Data. Specifically, "High-Quality, Labeled Data". And every time you upload your Passport photo, your child's birthday picture, or your company's unreleased product prototype to a "Free Background Remover," you are essentially volunteering to be a data point in a massive global experiment.

This article is a security warning. We will analyze the hidden terms of service of popular tools, explain the rising threat of "Deepfake Identity Theft" in India, and introduce the only architectural solution that guarantees privacy: Local Edge Computing.

Part 1: The Economics of "Free" AI Tools

Let's look at the business logic. Processing an image using a Deep Neural Network (like U-Net) requires heavy GPU computation. If you run this on Amazon Web Services (AWS) using NVIDIA A100 GPUs, it costs real money—approximately ₹0.50 to ₹1.00 per second of compute.

If a website lets 100,000 users process images for free every day, its bill runs into the lakhs. How does it survive?
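To make the arithmetic concrete, here is a rough back-of-the-envelope sketch using the figures quoted above (₹0.50 to ₹1.00 per second, 100,000 images a day). The `secondsPerImage` value of 2 is purely an assumption for illustration, as are all the function and variable names; real processing time depends on the model and the image size.

```typescript
// Rough daily GPU bill for a free image tool, using the article's figures.
// ASSUMPTION: secondsPerImage is hypothetical; the article does not state it.
interface CostEstimate {
  lowRupees: number;
  highRupees: number;
}

function estimateDailyGpuCost(
  imagesPerDay: number,
  secondsPerImage: number,
  lowRatePerSecond = 0.5, // rupees per GPU-second (article's lower bound)
  highRatePerSecond = 1.0, // rupees per GPU-second (article's upper bound)
): CostEstimate {
  const gpuSeconds = imagesPerDay * secondsPerImage;
  return {
    lowRupees: gpuSeconds * lowRatePerSecond,
    highRupees: gpuSeconds * highRatePerSecond,
  };
}

const RUPEES_PER_LAKH = 100_000;
const estimate = estimateDailyGpuCost(100_000, 2);
console.log(
  `₹${estimate.lowRupees / RUPEES_PER_LAKH} to ₹${estimate.highRupees / RUPEES_PER_LAKH} lakh per day`,
);
// prints "₹1 to ₹2 lakh per day"
```

Under these assumptions the tool burns through ₹1 to ₹2 lakh every single day before earning anything, which is why a "free" service needs some other source of value.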

1. The Freemium Trap

They give you a preview-sized image (500x500 pixels) for free but charge ₹20 per image for the HD download. This is honest, but many users mistakenly assume the upload itself was private.

2. The "Data Training" Clause

This is the dangerous one. Many AI companies are desperate for real-world edge cases. They need to teach their AI how to distinguish "Hair" from "Fur", or "Glass" from "Air".

Your photos provide this training data. When you upload a photo of yourself, that image is stored, labeled, and fed into the neural network to make it smarter for the next paying customer.

The Risk: Once a neural network has "learned" your face, that mathematical representation is baked into the model's weights. In practice, it is extremely hard to delete selectively, short of retraining the model.

Part 2: Why This is Dangerous (The Indian Context)

You might say, "I don't care if they see my face." But in India, your face is your financial key.

The AePS (Aadhaar Enabled Payment System) Threat

Biometric fraud is rising in India. Scammers use leaked high-resolution photos to create "Deepfake Video KYCs" or, arguably, even to spoof Face Auth systems.

When you edit your Passport Photo or PAN Card Signature on an insecure cloud tool:

- You are uploading a high-resolution, perfectly lit, front-facing biometric sample.

- If that database is breached (and such databases often are), your biometric identity can be sold on the dark web.

- Unlike a password, you cannot change your face. A leak is permanent.

The Corporate Leak (NDA Violation)

Imagine you are a graphic designer working on a confidential ad campaign for a client. You use a cloud remover to isolate the product. You have just technically violated your NDA by sharing that asset with a third party (the tool provider).

Part 3: The Solution - "Local-First" Architecture

At MojoDocs, we realized that the only way to solve this was to change the architecture of the internet.

How It Works: Send the Chef, Not the Ingredients

Traditional (Cloud): You pack your ingredients (Photo) -> Ship them to a Restaurant (Server) -> Chef cooks -> Ships the dish back. Risk: The driver can eat your food.

Modern (MojoDocs): We ship the Chef (AI Model) -> To Your Kitchen (Browser) -> You cook. Risk: Zero. The food never leaves your house.

We use WebAssembly (WASM) and WebGL. Together they let your browser (Chrome, for example) run heavy compiled C++ AI code directly on your device. Your own laptop's or phone's CPU and GPU do the math.
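To make "your device does the math" concrete, here is a minimal sketch of the final step of a background removal done entirely in memory. It is not MojoDocs's actual code. It assumes a locally run segmentation model has already produced `mask`, one foreground confidence value between 0 and 1 per pixel; the function name and parameters are hypothetical.

```typescript
// Applies a foreground mask to RGBA pixels entirely in memory.
// ASSUMPTION: `mask` stands in for the output of a segmentation model that
// ran locally (for example via WebAssembly); no network call is involved.
function applyForegroundMask(
  rgba: Uint8ClampedArray, // pixel data laid out as R, G, B, A, R, G, B, A, ...
  mask: Float32Array, // one confidence value per pixel, 0 = background, 1 = foreground
): Uint8ClampedArray {
  if (rgba.length !== mask.length * 4) {
    throw new Error("mask must have exactly one value per RGBA pixel");
  }
  const out = new Uint8ClampedArray(rgba); // copy, so the input stays untouched
  for (let i = 0; i < mask.length; i++) {
    const confidence = Math.min(1, Math.max(0, mask[i]));
    // Scale only the alpha channel: background pixels become transparent.
    out[i * 4 + 3] = Math.round(rgba[i * 4 + 3] * confidence);
  }
  return out;
}

// Two opaque pixels: the first is foreground, the second is background.
const pixels = new Uint8ClampedArray([200, 150, 100, 255, 10, 20, 30, 255]);
const result = applyForegroundMask(pixels, new Float32Array([1, 0]));
console.log(Array.from(result));
```

In a browser, the input would typically come from a canvas `ImageData` object's `data` array, and the result would be drawn back to the canvas. At no point does the pixel buffer need to leave the page.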

Part 4: The Audit (Prove It Yourself)

Security should never be taken on faith. Verify it.

Verification Method 1: The "Flight Mode" Test

This is the simplest physical proof.

- Open MojoDocs Background Remover.

- Wait a few seconds for the model to load and be cached.

- Disconnect your Wi-Fi / turn off mobile data.

- Upload a photo.

- Result: It works perfectly. The background vanishes.

- Conclusion: The data cannot have left your device, because you had no internet connection. Try this with Adobe Express or Canva: they will fail.

Verification Method 2: The Network Inspector (For Geeks)

- Right-click anywhere on MojoDocs -> "Inspect Element".

- Go to the "Network" tab.

- Upload your image.

- Look for "XHR" or "Fetch" requests.

- Result: You will see ZERO upload requests containing your image data. You might see a request to Google Analytics (for page views), but no binary image data leaving.
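If you would rather not eyeball the request list, the Network tab can export everything it recorded as a HAR file, and a few lines of code can flag suspicious requests. The sketch below is a rough heuristic, not a complete audit: it models only three fields of a HAR request (`method`, `url`, `bodySize`), and the `thresholdBytes` cutoff, the function name, and the sample URLs are all hypothetical.

```typescript
// Minimal subset of a HAR 1.2 entry; real HAR files carry many more fields.
interface HarRequest {
  method: string;
  url: string;
  bodySize: number; // request body size in bytes, or -1 if unknown
}

interface HarEntry {
  request: HarRequest;
}

const UPLOAD_METHODS = new Set(["POST", "PUT", "PATCH"]);

// Returns entries whose request body is large enough to plausibly hold an image.
// ASSUMPTION: thresholdBytes is a cutoff chosen by the reader, e.g. a fraction
// of the size of the photo that was processed.
function findSuspectUploads(entries: HarEntry[], thresholdBytes: number): HarEntry[] {
  return entries.filter(
    ({ request }) =>
      UPLOAD_METHODS.has(request.method.toUpperCase()) &&
      request.bodySize >= thresholdBytes,
  );
}

// Hypothetical sample data standing in for the `log.entries` array of a HAR export.
const sample: HarEntry[] = [
  { request: { method: "GET", url: "https://example.com/model.wasm", bodySize: 0 } },
  { request: { method: "POST", url: "https://example.com/collect", bodySize: 412 } },
  { request: { method: "POST", url: "https://example.com/upload", bodySize: 2_400_000 } },
];

const suspects = findSuspectUploads(sample, 100_000);
console.log(suspects.map((entry) => entry.request.url));
```

With this sample, only the 2.4 MB POST is flagged; the small analytics-style POST is not. An empty result on a real export is a good sign, though a determined uploader could split an image into many small requests, so the "Flight Mode" test remains the stronger proof.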

Part 5: India's Legal Landscape (DPDPA 2023)

The Digital Personal Data Protection Act, 2023 (DPDPA) is now law in India. It imposes strict penalties on "Data Fiduciaries" (companies) for mishandling user data.

However, it also emphasizes User Duty. If you voluntarily upload your data to a foreign server that is not compliant with Indian laws, you are taking a risk.

MojoDocs follows Privacy by Design. Since we do not collect the data, we cannot leak it. We are the safest tool for compliance-heavy industries like:

- FinTech / Banking Agents: Processing KYC documents.

- Healthcare: Doctors uploading patient photos for records.

- Government Services: Scanning docs for e-Office.

Conclusion: Convenience vs Security

For a long time, we had to choose: "Fast & Risky" (Cloud) or "Slow & Safe" (Offline Software). Technology has finally evolved.

With MojoDocs, you get the speed of AI with the safety of a bunker. In 2026, there is no excuse for uploading your face to a stranger's server.

The Golden Rule: If it's your face, your signature, or your child—keep it Local.