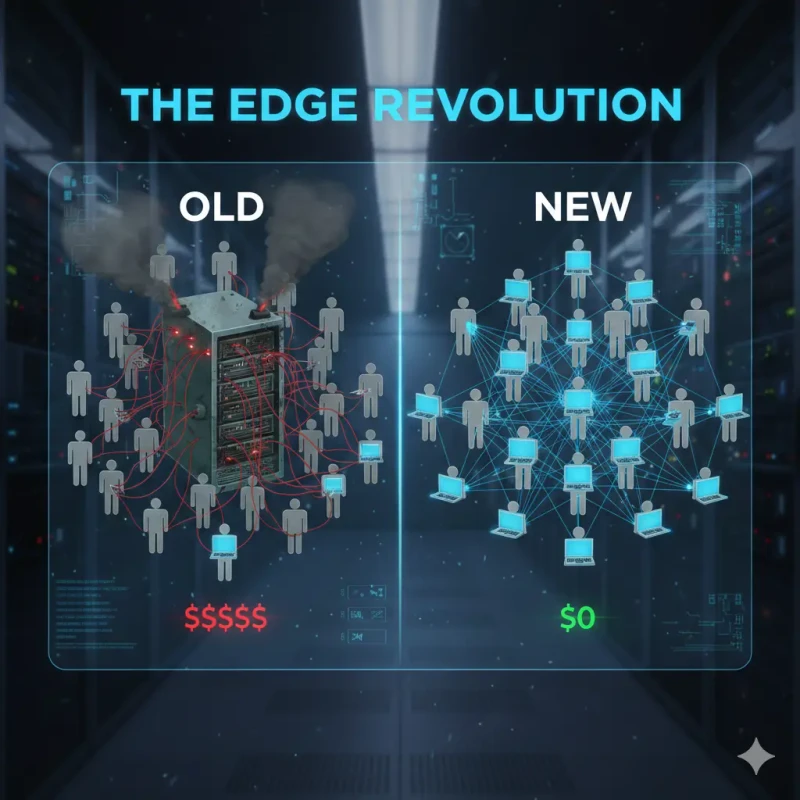

The Cloud Era is ending. With WebAssembly, WebGPU, and on-device Neural Engines, we are entering the era of Client-Side AI. Learn why cutting the server cord drives the marginal cost of inference to zero and makes privacy the default.

For the last 15 years, the software industry has operated on a single, unquestioned dogma: "Put it in the Cloud."

The logic was sound in 2010. User devices were weak (underpowered netbooks, early iPhones) and servers were powerful, so the "Thin Client" model won. The browser was just a display; the "Brain" lived in an AWS data center in Virginia.

But in 2026, this logic is inverted. By 2010 standards, the average user's device is a supercomputer. An iPhone 16 has a 16-core Neural Engine capable of roughly 35 trillion operations per second (TOPS). A MacBook Air runs on Apple Silicon that outperforms many desktop machines. Why, then, do we still upload a 5MB JPEG to a server 5,000 miles away just to remove a background?

This is inefficient. It is slow. It is expensive. And at MojoDocs, we believe it is over. We are betting the farm on Client-Side AI.

Part 1: The Economic Calculus (Who Pays?)

Let's look at the P&L (Profit & Loss) statement of a traditional AI company vs a Client-Side AI company.

The Cloud API Model (The "Remove.bg" Way)

Every time a user uploads an image:

- Ingress Cost: Bandwidth to receive 5MB.

- Compute Cost: An NVIDIA A100 GPU spins up to run inference (approx 200ms).

- Storage Cost: Saving the result.

- Egress Cost: Bandwidth to send it back.

Marginal Cost per User: > $0.00. It scales linearly: more users means higher bills.
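To make the linear scaling concrete, here is a minimal per-image cost model built from the four line items above. Every unit price in it is a hypothetical placeholder, not a quote from AWS, NVIDIA, or any other provider; the 5MB image and ~200ms of GPU time come from the text, and the 5MB result size is an assumption.

```typescript
// Hypothetical unit prices for illustration only; substitute real quotes.
interface CloudPrices {
  ingressPerGB: number; // $ per GB received from the user
  gpuPerSecond: number; // $ per GPU-second of inference
  storagePerGB: number; // $ per GB of stored result attributed to this image
  egressPerGB: number;  // $ per GB sent back to the user
}

const MB = 1024 ** 2;
const GB = 1024 ** 3;

// Cost of one cloud round trip for a single image.
function cloudCostPerImage(
  p: CloudPrices,
  uploadBytes: number,
  resultBytes: number,
  gpuSeconds: number,
): number {
  const ingress = (uploadBytes / GB) * p.ingressPerGB;
  const compute = gpuSeconds * p.gpuPerSecond;
  const storage = (resultBytes / GB) * p.storagePerGB;
  const egress = (resultBytes / GB) * p.egressPerGB;
  return ingress + compute + storage + egress;
}

// The total bill is linear in volume: double the images, double the cost.
function cloudBill(perImage: number, images: number): number {
  return perImage * images;
}

// Example with made-up prices: 5 MB upload, 5 MB result, 200 ms of GPU time.
const examplePrices: CloudPrices = {
  ingressPerGB: 0,
  gpuPerSecond: 0.001,
  storagePerGB: 0.02,
  egressPerGB: 0.09,
};
const perImage = cloudCostPerImage(examplePrices, 5 * MB, 5 * MB, 0.2);
```

However small `perImage` is, it never reaches zero, so `cloudBill` grows in lockstep with usage.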

The Client-Side Model (The "MojoDocs" Way)

Every time a user processes an image:

- Compute Cost: $0 (The user's battery pays for it).

- Bandwidth Cost: $0 per image (No upload; the model is downloaded once and can be cached by the browser).

Marginal Cost per User: $0.00.
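One server cost does remain: the model weights have to reach each browser once, typically from a CDN. A minimal sketch of how that one-time cost amortizes, assuming a hypothetical 40 MB model and a made-up CDN price; neither figure comes from MojoDocs.

```typescript
const MB = 1024 ** 2;
const GB = 1024 ** 3;

// Amortized server-side cost per image when inference runs in the browser.
// Assumption: the only server cost is delivering the model weights once per
// device; after that, the browser cache serves them.
function clientCostPerImage(
  modelBytes: number,      // size of the model weights (hypothetical)
  cdnPricePerGB: number,   // CDN egress price in $ per GB (hypothetical)
  imagesPerDevice: number, // images processed on that device from one download
): number {
  if (imagesPerDevice <= 0) throw new Error("imagesPerDevice must be positive");
  const oneTimeDownload = (modelBytes / GB) * cdnPricePerGB;
  return oneTimeDownload / imagesPerDevice; // shrinks toward 0 as usage grows
}

// Example: a 40 MB model at a made-up $0.05 per GB.
const afterOneImage = clientCostPerImage(40 * MB, 0.05, 1);
const afterThousandImages = clientCostPerImage(40 * MB, 0.05, 1000);
```

Each additional image on a device that already has the model cached adds no server cost at all, which is the sense in which the marginal cost is $0.00.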

This creates an unassailable competitive advantage. We can offer unlimited HD processing for free, forever. Our competitors cannot match this without going bankrupt, because their architecture builds cost into every click.

Part 2: The Enabling Technologies (WASM & WebGPU)

Why didn't we do this sooner? Because JavaScript alone was too slow for the job. It runs on a single thread by default and was never designed for heavy matrix multiplication (the math behind AI).
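A naive matrix multiply shows why the workload is heavy: multiplying an m×k matrix by a k×n matrix takes m·k·n multiply-adds, and neural network layers do this constantly. The plain TypeScript version below is a teaching sketch of the inner loop that WASM SIMD and GPU compute shaders exist to accelerate, not how a production inference runtime implements it.

```typescript
// Row-major matrix: element (r, c) lives at data[r * cols + c].
interface Matrix {
  rows: number;
  cols: number;
  data: Float32Array;
}

// Naive O(m * k * n) matrix multiply: C = A x B.
// The i-p-j loop order walks B and C row by row, which is cache-friendlier
// than the textbook i-j-p order.
function matmul(a: Matrix, b: Matrix): Matrix {
  if (a.cols !== b.rows) throw new Error("inner dimensions must match");
  const out = new Float32Array(a.rows * b.cols); // zero-initialized
  for (let i = 0; i < a.rows; i++) {
    for (let p = 0; p < a.cols; p++) {
      const aip = a.data[i * a.cols + p];
      for (let j = 0; j < b.cols; j++) {
        out[i * b.cols + j] += aip * b.data[p * b.cols + j];
      }
    }
  }
  return { rows: a.rows, cols: b.cols, data: out };
}

// Number of multiply-adds for an (m x k) by (k x n) product.
function macCount(m: number, k: number, n: number): number {
  return m * k * n;
}
```

A single 1024×1024 by 1024×1024 product is already `macCount(1024, 1024, 1024)`, just over a billion multiply-adds, and a model runs many of these per inference.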

Enter WebAssembly (WASM).

WASM allows us to take high-performance code written in Rust or C++ and compile it into a binary format that modern browsers can execute at near-native speed, inside the same security sandbox as JavaScript.
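The host side of this is small. In a browser you would normally call `WebAssembly.instantiateStreaming(fetch(url))` on the `.wasm` file your Rust or C++ toolchain produced. To keep the sketch self-contained, the bytes below are a tiny hand-assembled module exporting one function, `add(i32, i32) -> i32`, standing in for a real compiled runtime; `ADD_WASM`, `AddFn`, and `loadAdd` are illustrative names.

```typescript
// A minimal hand-assembled WebAssembly module exporting add(a, b) = a + b.
// It stands in for the .wasm file a Rust or C++ build would produce.
const ADD_WASM = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00, // magic "\0asm", version 1
  0x01, 0x07, 0x01, 0x60, 0x02, 0x7f, 0x7f, 0x01, 0x7f, // type: (i32, i32) -> i32
  0x03, 0x02, 0x01, 0x00, // function 0 has type 0
  0x07, 0x07, 0x01, 0x03, 0x61, 0x64, 0x64, 0x00, 0x00, // export "add" = function 0
  0x0a, 0x09, 0x01, 0x07, 0x00, // code: one body, 7 bytes, no locals
  0x20, 0x00, 0x20, 0x01, 0x6a, 0x0b, // local.get 0, local.get 1, i32.add, end
]);

type AddFn = (a: number, b: number) => number;

// Compile and instantiate the module, then return its typed export.
async function loadAdd(bytes: BufferSource): Promise<AddFn> {
  const { instance } = await WebAssembly.instantiate(bytes);
  return instance.exports.add as AddFn;
}
```

Once loaded, `add` is called like any JavaScript function, but its body runs as compiled WebAssembly; a real model runtime exposes its inference entry points the same way.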

Enter WebGPU.

This is the successor to WebGL. It gives web pages low-level, sandboxed access to the device's GPU (Graphics Card), including general-purpose compute shaders, which WebGL never offered. That means we can run the massively parallel math of neural networks on the GPU. Suddenly, running a Stable Diffusion or segmentation model in Chrome isn't just possible; it's practical.
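A page cannot assume WebGPU is present, so a common pattern is to probe for it and fall back to a WASM backend. A minimal sketch: `navigator.gpu` and `requestAdapter()` are the real WebGPU entry points, while `Backend`, `GpuLike`, `NavigatorLike`, and `pickBackend` are hypothetical names. The navigator is passed in as a parameter so the logic can be exercised outside a browser.

```typescript
type Backend = "webgpu" | "wasm";

// The slice of the WebGPU API this sketch relies on. In a browser,
// navigator.gpu.requestAdapter() resolves to a GPUAdapter or to null.
interface GpuLike {
  requestAdapter(): Promise<object | null>;
}
interface NavigatorLike {
  gpu?: GpuLike;
}

// Prefer WebGPU when an adapter is actually available; otherwise use WASM.
async function pickBackend(nav: NavigatorLike): Promise<Backend> {
  if (!nav.gpu) return "wasm"; // browser does not expose WebGPU at all
  try {
    const adapter = await nav.gpu.requestAdapter();
    return adapter ? "webgpu" : "wasm"; // null means no suitable GPU
  } catch {
    return "wasm"; // treat any probe failure as "no WebGPU"
  }
}
```

In a page you would pass the browser's `navigator` and then load whichever runtime the result names. Checking the adapter, not just the presence of `navigator.gpu`, matters because a browser can expose the API on a machine with no usable GPU.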

Part 3: Privacy as a "Moat"

Privacy is no longer just a regulatory hurdle; it is a feature.

In the Cloud era, "Privacy" was a promise. "We promise we encrypt your data at rest." "We promise we don't look at it." Trust was required.

In the Client-Side era, the promise becomes unnecessary. The privacy is architectural: if the data never leaves the device, the service provider has nothing to leak, sell, or hand over, because it never holds the data.
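Concretely, "the data never leaves the device" means the whole pipeline operates on bytes in the page's own memory. The sketch below is the last step of a local background remover: given RGBA pixels (the layout of canvas `ImageData`) and a per-pixel foreground probability from an on-device segmentation model (assumed here, not shown), it makes background pixels transparent. There is no `fetch` and no upload anywhere in the path; the function name and the 0.5 threshold are illustrative assumptions.

```typescript
// Make background pixels transparent using a per-pixel foreground mask.
// rgba: 4 bytes per pixel (R, G, B, A), the layout used by canvas ImageData.
// mask: one foreground probability in [0, 1] per pixel, assumed to come from
// an on-device segmentation model. Runs entirely in memory; nothing is uploaded.
function applyForegroundMask(
  rgba: Uint8ClampedArray,
  mask: Float32Array,
  threshold = 0.5,
): Uint8ClampedArray {
  if (rgba.length !== mask.length * 4) {
    throw new Error("mask must have one entry per RGBA pixel");
  }
  const out = new Uint8ClampedArray(rgba); // copy; leave the input untouched
  for (let i = 0; i < mask.length; i++) {
    if (mask[i] < threshold) out[i * 4 + 3] = 0; // zero the alpha channel
  }
  return out;
}
```

In a browser, the input would come from `ctx.getImageData(...).data`, and the result can be drawn back with `new ImageData(out, width, height)`, so the image is never serialized for the network.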

The "Enterprise" Sale:

Imagine selling a tool to a Law Firm or a Defense Contractor.

Pitch A (Cloud): "Upload your secret contracts to our cloud. We are SOC2 compliant."

Pitch B (Local): "Process your secret contracts on your own laptops. The documents never touch the internet."

Pitch B wins every time.

Part 4: The Green Computing Angle

We rarely talk about the carbon footprint of the internet. Training AI models emits tons of CO2, but inference (running the model) is where the long-term impact lies, because it happens every time anyone uses the model.

Sending exabytes of video and image data across transoceanic fiber-optic cables to centralized data centers costs energy at every hop, spent on routing, switching, and cooling.

Edge Computing is Green Computing. It runs on hardware the user already owns and powers, instead of on additional data-center capacity. It minimizes network traffic. We believe it is the most sustainable way to scale AI to billions of users.
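How much traffic does local inference actually save? A rough sketch, using the 5MB image from earlier and assuming a 5MB result and a hypothetical 40MB model download: client-side processing moves more data up front, then breaks even after a handful of images and stays flat. The sizes are illustrative, and bytes are not energy; converting them to kWh depends on network energy-intensity estimates that vary widely, so the sketch stops at bytes.

```typescript
const MB = 1024 ** 2;

// Bytes moved over the network to process `images` images in the cloud:
// every image is uploaded and its result downloaded.
function cloudTrafficBytes(images: number, uploadBytes: number, resultBytes: number): number {
  return images * (uploadBytes + resultBytes);
}

// Smallest image count at which a one-time model download moves no more data
// than the cloud round trips would. After that, local traffic stays flat.
function breakEvenImages(modelBytes: number, uploadBytes: number, resultBytes: number): number {
  const perImage = uploadBytes + resultBytes;
  if (perImage <= 0) throw new Error("per-image traffic must be positive");
  return Math.ceil(modelBytes / perImage);
}
```

With those numbers, `breakEvenImages(40 * MB, 5 * MB, 5 * MB)` is 4: beyond four images, processing locally moves strictly less data than the cloud round trips.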

Conclusion: The "Thick Client" Returns

History is a pendulum. We went from Mainframes (Central) to PCs (Local) to Cloud (Central). Now, we are swinging back to Local.

The next generation of unicorns won't just be SaaS (Software as a Service). They will be MaaS (Model as a Service): companies that ship the model itself, delivering powerful intelligence that lives and breathes on your device.

MojoDocs is just the beginning. The browser is the new Operating System.